Defense Industry Moves Quickly

Defense technology firms are rapidly distancing themselves from Anthropic’s Claude AI after the U.S. Department of Defense moved to blacklist the technology over national security concerns.

Several companies working on military contracts have instructed employees to stop using the AI model and begin transitioning to alternative platforms.

The shift reflects how seriously contractors take compliance rules tied to government defense work. Firms operating within the Pentagon’s ecosystem often move quickly to avoid even the appearance of regulatory conflict.

A Sudden Reversal

The development marks a dramatic turn for Anthropic.

The company had previously gained significant traction within government systems. Through partnerships with defense-focused software providers, its Claude model had been deployed inside classified networks under a major contract with the Department of Defense.

Enterprise customers represent the vast majority of Anthropic’s revenue, making government adoption particularly important.

Now, many defense contractors are proactively removing the technology from internal workflows.

Contractors Switching Models

Defense startups and contractors are reportedly transitioning to other AI providers or open-source alternatives while the dispute plays out.

Executives say the shift is being made primarily out of caution rather than dissatisfaction with Claude’s capabilities.

In fact, several industry insiders have acknowledged the platform’s strong performance in sensitive data environments, which is one reason it gained traction in defense systems in the first place.

But when government rules change, contractors rarely wait for full legal clarity before adjusting operations.

The Core Dispute

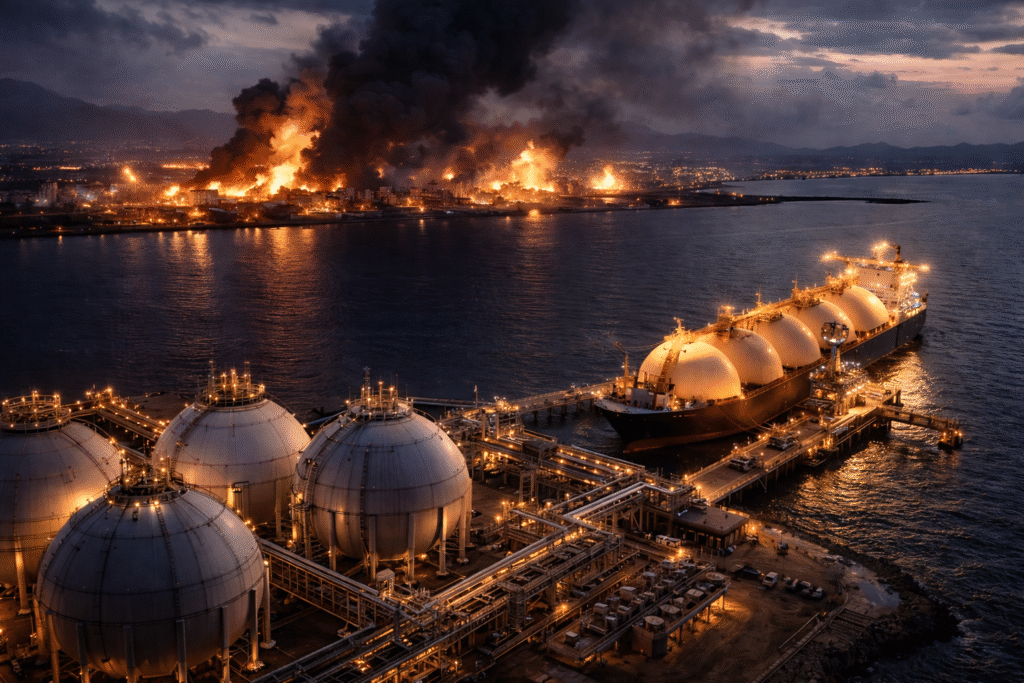

The standoff stems from disagreements over how AI models can be used within military environments.

Anthropic reportedly pushed for restrictions that would prevent its systems from being used for:

- Mass domestic surveillance

- Fully autonomous weapons systems

Government officials, meanwhile, sought broader operational flexibility.

The disagreement ultimately led to the Pentagon labeling the technology a potential supply chain risk and instructing contractors to phase it out.

Industry Impact

If the designation becomes permanent, it will apply specifically to defense-related use cases.

Companies could still theoretically use Claude for commercial customers outside of military contracts.

Still, the defense sector’s response shows how sensitive the supply chain has become as AI tools are integrated into national security infrastructure.

Replacing deeply embedded AI systems also requires time, engineering resources, and new negotiations with vendors.

A Competitive AI Landscape

Anthropic is not the only player operating in government AI.

The U.S. government works with multiple providers across the AI ecosystem, including major cloud and model developers.

That competitive landscape means contractors often maintain relationships with multiple vendors to avoid dependency on a single technology supplier.

In practice, this flexibility makes rapid switching possible, even if it temporarily slows development.

WSA Take

The episode highlights a new reality in the AI industry: technology decisions are increasingly geopolitical.

For companies operating in defense or intelligence markets, regulatory alignment may matter just as much as technical capability.

Anthropic’s dispute with the government shows the tension between AI safety principles and military deployment needs.

Meanwhile, competitors are ready to step in.

The AI race is no longer just about building the best model.

It’s about who can operate inside the rules of national security systems.

Disclaimer

WallStAccess is a financial media platform providing market commentary and analysis for informational and educational purposes only. This content does not constitute investment advice, a recommendation, or an offer to buy or sell any securities. Readers should conduct their own research or consult a licensed financial professional before making investment decisions.