The AI Infrastructure Race Just Got Bigger

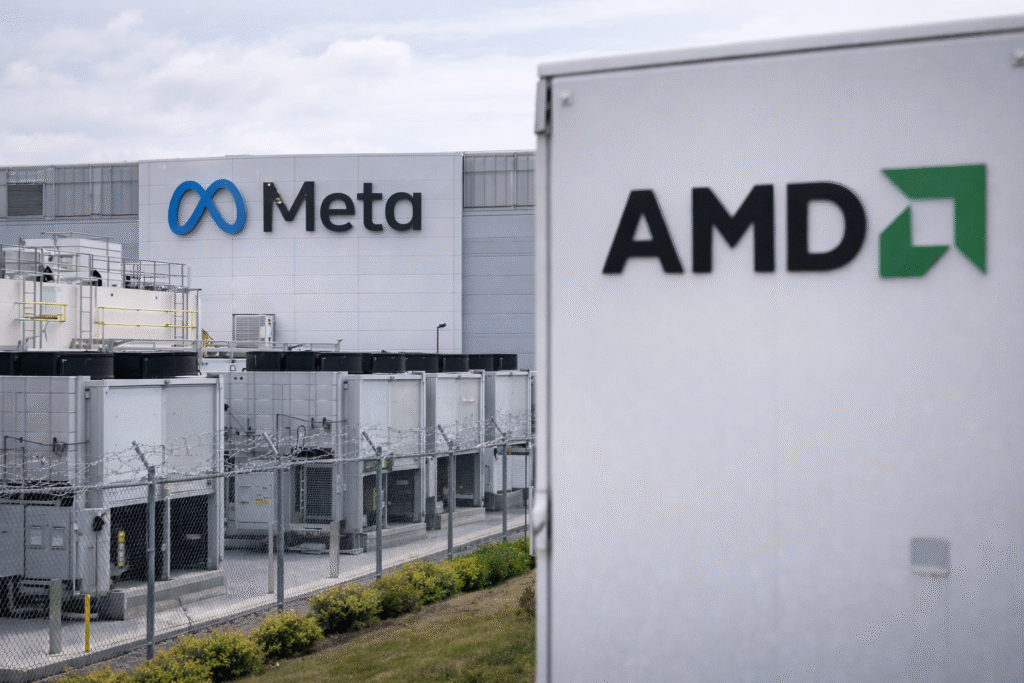

Meta and AMD have entered into a multiyear agreement that underscores just how massive the AI infrastructure build-out has become.

Under the deal, Meta will purchase up to 6 gigawatts’ worth of AI chips from AMD — a scale typically associated with power plants, not processors.

To align incentives, AMD will issue Meta 160 million shares of common stock, vesting in tranches tied to performance milestones. The first trigger: shipping 1 gigawatt of chips.

AMD shares jumped as much as 10% in premarket trading following the announcement.

What’s Actually Being Deployed

The first wave of GPUs will center on AMD’s MI450 line, integrated into its Helios rack-scale data center systems alongside EPYC CPUs. Deployment is expected in the second half of the year.

Meta will also purchase large volumes of AMD CPUs, including the Venice chip and the upcoming Verano processor — highlighting an important shift in AI architecture.

As AI moves toward inference and agent-based systems, CPUs are becoming more critical to model orchestration and workload management inside data centers.

Nvidia Isn’t Out of the Picture

This deal comes just days after Meta announced a separate multiyear agreement with Nvidia, which will supply millions of Blackwell and Rubin GPUs.

Meta is also expected to host one of the first large-scale deployments of Nvidia’s Grace CPU server systems.

The takeaway: Meta isn’t choosing sides. It’s securing supply.

In a market where GPU access can determine competitive advantage, hyperscalers are building redundancy into their AI stacks.

$135 Billion and Counting

Meta plans to spend up to $135 billion in capital expenditures in 2026, much of it directed toward AI infrastructure.

It’s not alone.

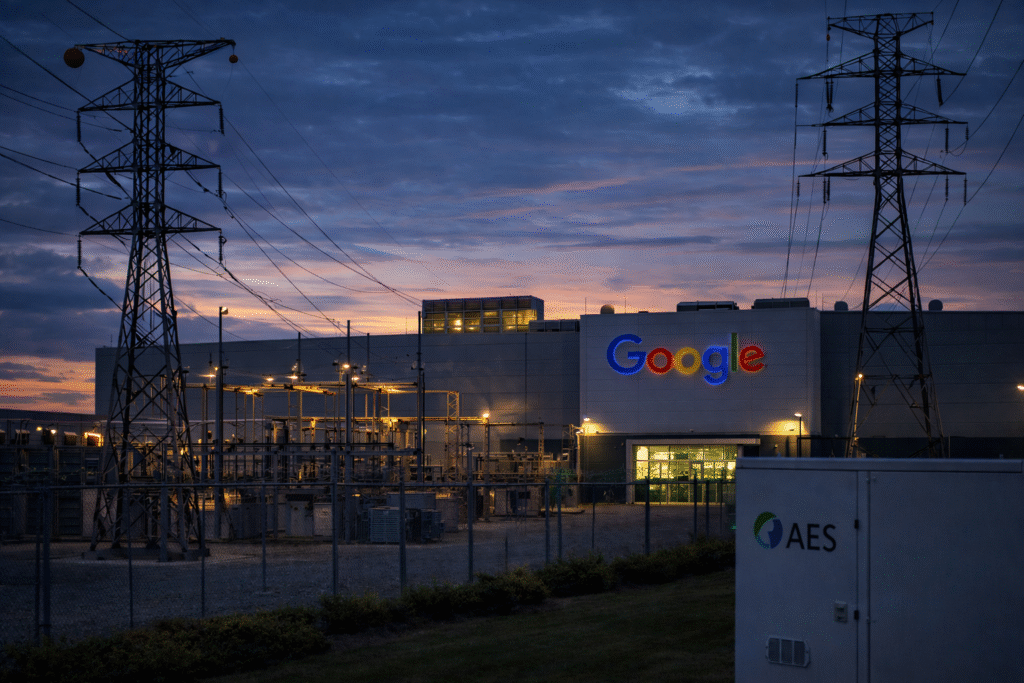

Meta, Amazon, Google, and Microsoft are collectively expected to spend roughly $650 billion on AI investments.
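
For a rough sense of proportion, the two figures above can be put side by side. The sketch below is back-of-the-envelope arithmetic only: it assumes Meta's "up to $135 billion" and the group's "roughly $650 billion" cover comparable periods and spending categories, which the figures themselves do not confirm. The variable and function names are illustrative.

```python
# Figures quoted in the article, in billions of US dollars.
# Both are approximations: one is an upper bound, the other a rough total.
META_CAPEX_MAX_BN = 135.0   # "up to $135 billion"
BIG_FOUR_TOTAL_BN = 650.0   # "roughly $650 billion" across all four companies


def share_of_total(part_bn: float, total_bn: float) -> float:
    """Return part/total as a fraction; assumes both use the same units."""
    if total_bn <= 0:
        raise ValueError("total must be positive")
    return part_bn / total_bn


# Assumption: the two figures are directly comparable (not confirmed above).
meta_share = share_of_total(META_CAPEX_MAX_BN, BIG_FOUR_TOTAL_BN)
others_bn = BIG_FOUR_TOTAL_BN - META_CAPEX_MAX_BN

print(f"Meta's maximum share of the combined figure: {meta_share:.1%}")
print(f"Implied spend by the other three: ${others_bn:.0f}B")
```

On those assumptions, Meta's upper bound works out to about a fifth of the combined total, with the other three accounting for roughly $515 billion between them.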

That level of capital deployment has sparked debate across Wall Street. Some investors question whether returns will justify the outlay, especially as AI monetization remains uneven across platforms.

Interestingly, Meta’s shares have held up better than its peers’ since its spending announcement, while Amazon, Google, and Microsoft have seen steeper declines.

The Bigger Theme: Diversification Over Dependency

For chipmakers, this signals something important.

The hyperscalers are no longer dependent on a single GPU vendor. Even as Nvidia remains dominant, AMD is gaining meaningful share in AI infrastructure.

At the same time, concerns remain that custom chips from Amazon, Google, or Meta could disrupt the broader GPU market. But analysts largely believe replacing high-performance general-purpose GPUs will not be simple.

The compute demand curve is still rising.

And so is the competition to supply it.

WSA Take

This deal isn’t just about one customer or one chip line.

It’s about scale.

Meta is committing to power-plant-level GPU capacity because AI is no longer experimental — it’s infrastructure.

For AMD, this represents validation at hyperscaler scale. For Meta, it’s supply security in a market where access to compute equals competitive positioning.

The AI arms race isn’t slowing. It’s industrializing.

And the companies building the physical backbone of AI are becoming just as important as the models themselves.

Disclaimer

WallStAccess is a financial media platform providing market commentary and analysis for informational and educational purposes only. This content does not constitute investment advice, a recommendation, or an offer to buy or sell any securities. Readers should conduct their own research or consult a licensed financial professional before making investment decisions.